So there’s no confusion: I’m a digital guy.

I’ll take a CD over vinyl, cameraphone over Polaroid. When it comes to life, and filmmaking, I’m largely pro-technology, anti-Luddite. In fact, I have very little patience for aesthetes who blather on and on about the infinite advantages of the analog world, be it $10,000 turntables or [Maxivision](http://johnaugust.com/archives/2004/digital-cinema-gets-a-little-closer#comment-1449) projectors.

Give me some ones and zeroes, and I’m happy.

But in the same week, I had two experiences that pointed out the downside of my digital zeal. As things get faster, cheaper and more flexible, it becomes harder and harder to make “final” decisions.

I recently had the good fortune to visit the two motion-capture films [Robert Zemeckis](http://imdb.com/name/nm0000709/) is making: [Monster House](http://imdb.com/title/tt0385880/) and [Beowulf](http://imdb.com/title/tt0442933/). (The former is directed by [Gil Kenan](http://imdb.com/name/nm1481493/); the latter by Zemeckis himself.)

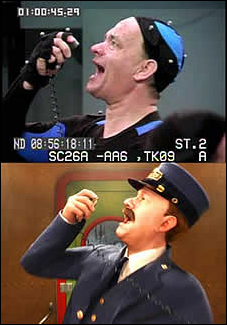

For those who missed all the stories about the motion-capture process when [The Polar Express](http://imdb.com/title/tt0338348/) came out, here’s my incredibly simplified explanation. Motion capture uses real actors, who wear special clothing (unitards, basically) outfitted with reflective dots. They have similar, smaller dots on their faces.

(Compared with this picture of Tom Hanks from The Polar Express, it seems the dot-to-skin ratio has shifted greatly. On the set of Beowulf, you could scarcely see the actors beneath all the mo-cap dots.)

Rather than filming with traditional cameras, the crew uses special sensors that record the location of each dot in space, from multiple angles.

Computers then transform this data into 3-D models. The actors are performing on an empty stage; there are no sets or props or costumes until later in the process, when animators map this information onto the wireframes. So “motion capture” means just that — you’re capturing every movement made by the actors, from big (swinging a sword) to small (a sneer). Special sensors even record each eye-blink.

While he’s on the set, working with the actors, all the director has to worry about is the performances. It’s more like directing theatre than a movie. It’s only afterwards that he sits down to “shoot” the movie.

At first listen, this sounds a lot like how George Lucas shot the last three Star Wars movies, with actors working against green screens. But it’s actually quite a bit different. Lucas is filming the actors; Zemeckis is simply capturing the information. Most notably, Zemeckis doesn’t even have to decide where to put the camera. Sitting at a computer months from now, he can pick any angle. He could play a scene in close-ups, or wide shots, or have the “camera” do impossible moves. He could decide to make the movie 3-D. There are really no limits.

And this is the biggest potential problem with motion capture. With nearly infinite options, how does the director decide what he wants? Is there such a thing as too much choice?

These thoughts were on my mind as I went to [ResFest](http://www.resfest.com/) at the Egyptian Theatre in Hollywood. The film festival, which visits five cities each year, focuses on digital filmmaking, be it video, animation or hybrids of the two.

I specifically wanted to see the presentation about Panasonic’s new hi-def camcorder, the [AG-HVX200](http://tinyurl.com/cd99l). Rather than recording to tape, it records to P2 cards, which are basically four SD chips arranged in an array, with the form factor of a PCMCIA card. The cards are expensive, but they’re not really for long-term storage. The idea is that you immediately dump the footage onto your hard drive, wipe the card, and re-use it. In that way, it’s very much like using a digital still camera.

It’s definitely the camera I wish I had in film school. For a certain level of independent film, I think it will be a godsend.

I’d rate the audience for the presentation at about Geek Factor 7, with a fair number of nines and tens. During the Q&A, the second question was about the “true” resolution of the recording chip, which the presenter somewhat snippily declined to answer. I guess I sympathize. That’s sort of a “When did you stop beating your wife?” question. The raw numbers will never match the processed result, which leads to inevitable grumbling about how the camera doesn’t live up to its potential.

Anyway.

The most annoying question came from a guy sitting behind me. I didn’t turn to look, but in my head, I immediately conjured the image of Comic Book Guy from The Simpsons. He took great umbrage at the presenter’s suggestion that one advantage of recording to P2 is that you can delete worthless takes in the field, freeing up more space on the card.

That’s heresy, he said, and irresponsible. You might need one of those 18 flubbed takes. I was alarmed at the passion of his conviction. He went on to say that he owned a post-production house, with several terabytes of storage at each workstation. So he would transfer everything.

Dude, I’m so happy you have so much storage. Maybe it can hold your ego. But I don’t think you understand how real filmmaking works.

We’ve all heard stories about how a director will shoot 20 takes of the same scene. What’s less often reported is the director doesn’t bring all 20 takes with him into the editing room. To understand why, we need to explain a little about film.

Film is expensive.

Okay, that was a short lesson. But that’s really the gist of it. When you’re shooting with film, you’re not only paying for the celluloid that runs through the camera, but also the processing of the negative, and the transfer ([telecine](http://en.wikipedia.org/wiki/Telecine)) that lets you bring it into the editing system. All of that costs money.

So when he’s finished shooting a scene from a given angle, the director tells the script supervisor, “Print 3, 5 and 7.” That is, tell the lab that we only want takes 3, 5, and 7. The rest of the film negative will be processed and stored, but the other 17 takes won’t be given to the editor. (In case of emergency, such as an unforeseen glitch in the printed takes, the editor may occasionally have the lab go back and print alternate takes. But this is rare, and costly.)

Note that directors will sometimes say, “Print everything.” This will incur the wrath of the producer, who watches the film processing budget soar.

So what Comic Book Guy failed to understand is that filmmaking traditionally hasn’t transferred everything. Many decisions are made in the field. Permanently deleting a take from the camera may be more extreme, but it’s not sacrilege. In many cases, it makes sense. Anyone who’s ever snapped a self-portrait with their cameraphone knows that the delete button gets almost as much use as the shutter.

Both the Zemeckis tour and Comic Book Guy’s misguided rant reminded me of a book I read a few months ago, [The Paradox of Choice](http://www.amazon.com/exec/obidos/tg/detail/-/0060005696/) by Barry Schwartz. As consumers, we’re conditioned from a young age to think that the more options you have, the better. But that’s not really the case. Study after study shows that the more choices you offer someone, the less happy they are with their ultimate decision.

That’s because we have a desire to optimize: we want to know we’ve made the best pick. But we psych ourselves out. The more options there are, the less likely it is that we’ve made the ideal choice, and we know it. A restaurant is a good example. If the menu only has eight things, there’s a pretty good chance you’ll know which one you want. It’s a quick decision. But if the menu has eighty things (think Cheesecake Factory), it’s a much more complicated decision-making process. Schwartz would argue you’d be less happy with the exact same meal in the second scenario. I think he’s right. The restaurant patron who says, “I want a salad” before he opens the menu is likelier to have a good meal.

I was a vegetarian for seven years. At most restaurants, there was exactly one thing I could order. And I was happy.

Coming back to digital filmmaking, I think this paradox of choice is one of the biggest challenges facing the industry.

Zemeckis has made a lot of movies, so I’d assume he’s able to make up his mind pretty quickly and decisively about what angles he wants to use. But a filmmaker with less experience could find himself paralyzed — or worse, beholden to outside influences (like the studio) pushing for more close-ups, new shots, or whatever. It’s hard to turn someone down when they ask, “Why not give it a try?”

I’ve already seen this happening in the editing room, where the rise of non-linear editing systems like Avid and Final Cut Pro has made it possible to work much more quickly. As the guy sitting at the right hand of the editor, I’ve definitely benefited, but it’s had a dispiriting effect on the editors themselves. They’re no longer the arbiters and gatekeepers they once were. Ironically, they’re a lot more like screenwriters now, in that nearly everyone can offer an opinion on what should be changed, and too often does.

So what’s the solution?

Self-discipline is a start. The director who only prints the takes he actually intends to use is making his life much easier. I think the [Dogma](http://en.wikipedia.org/wiki/Dogme_95) philosophy is just an expansion (or reduction) of that instinct. By depriving yourself of certain things, you can focus more closely on what’s left.

But the bigger need is to properly value the most precious resource in filmmaking: creative thought. It doesn’t show up on any budget, but it’s the single biggest factor in whether a film will be great.

Presenting a filmmaker with 100 options isn’t a help, but a hindrance. It means she has to consider 100 possibilities, or devise some system for winnowing them down into categories. That’s creative brainpower she could spend on some other, more important aspect of the film. Worse, the 99 unchosen possibilities will still weigh on her mind. In many ways, she was better off not knowing what she was missing.

Again, I’m a digital guy. But I think one of the best aspects of digital is its binary nature: yes or no, black or white, one or zero. To flourish, I think digital filmmaking needs to embrace some of this discipline.

We shouldn’t use technology simply to push back the decision-making process. Rather than cheering, “Anything is possible!” we should celebrate that “New things are possible.” The groundbreaking movies of the next decade won’t be the ones that use the most technology, but rather the ones that use it most intelligently.